Activation Functions


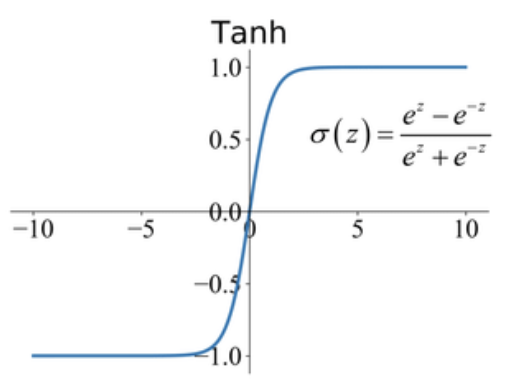

Tanh Activation

The hyperbolic tangent (tanh) activation is a nonlinearity used in neural networks, defined as:

$$f\left(x\right) = \frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}$$
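As a quick numerical check of the definition above, the following Python sketch evaluates the formula and compares it with the standard library's `math.tanh`. Computing $e^{x}$ literally overflows for large $x$, so the sketch uses the algebraically equivalent form $\tanh(|x|) = (1 - e^{-2|x|})/(1 + e^{-2|x|})$ (numerator and denominator multiplied by $e^{-|x|}$) and restores the sign because tanh is an odd function. The name `tanh_from_definition` is a hypothetical helper, not part of any library.

```python
import math


def tanh_from_definition(x: float) -> float:
    """Evaluate tanh(x) = (e^x - e^-x) / (e^x + e^-x) without overflow.

    Multiplying numerator and denominator by e^{-|x|} gives the equivalent
        tanh(|x|) = (1 - e^{-2|x|}) / (1 + e^{-2|x|}),
    and tanh is odd, so the sign of x is restored at the end.
    math.expm1 avoids cancellation in 1 - e^{-2|x|} when |x| is small.
    """
    u = math.expm1(-2.0 * abs(x))   # u = e^{-2|x|} - 1, between -1 and 0
    magnitude = -u / (2.0 + u)      # (1 - e^{-2|x|}) / (1 + e^{-2|x|})
    return math.copysign(magnitude, x)


if __name__ == "__main__":
    for x in (-3.0, -0.5, 0.0, 0.5, 3.0):
        print(f"x={x:+.1f}  formula={tanh_from_definition(x):+.6f}  "
              f"math.tanh={math.tanh(x):+.6f}")
    # The literal formula would call math.exp(1000.0) and raise OverflowError;
    # the rewritten form saturates cleanly at 1.0 instead.
    print(tanh_from_definition(1000.0))
```

The rewrite changes only how the expression is evaluated, not its value, so the two columns should agree to floating-point precision.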

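Historically, tanh became preferred over the sigmoid function because it gave better performance in multi-layer neural networks: its outputs are zero-centered in the range $(-1, 1)$, and its derivative peaks at 1 rather than the sigmoid's 0.25. However, it did not solve the vanishing gradient problem that sigmoids suffer from, since its derivative $1 - \tanh^2(x)$ still approaches zero as $|x|$ grows. That problem was tackled more effectively with the introduction of ReLU activations.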
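To make the gradient argument concrete, the sketch below compares the derivatives $\tanh'(x) = 1 - \tanh^2(x)$ and $\sigma'(x) = \sigma(x)(1 - \sigma(x))$ with the ReLU (sub)gradient. The chain-rule product at the end is a toy illustration only: it assumes ten stacked units that all receive the pre-activation 2.0 and ignores the weight matrices that real networks also multiply in. All function names are hypothetical helpers.

```python
import math


def sigmoid(x: float) -> float:
    # Numerically stable logistic sigmoid: never calls exp on a large positive number.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def tanh_grad(x: float) -> float:
    t = math.tanh(x)
    return 1.0 - t * t              # d/dx tanh(x) = 1 - tanh(x)^2


def sigmoid_grad(x: float) -> float:
    s = sigmoid(x)
    return s * (1.0 - s)            # d/dx sigma(x) = sigma(x) * (1 - sigma(x))


def relu_grad(x: float) -> float:
    return 1.0 if x > 0 else 0.0    # subgradient 0 chosen at x = 0


if __name__ == "__main__":
    print(f"{'x':>4} {'tanh_grad':>10} {'sigm_grad':>10} {'relu_grad':>10}")
    for x in (0.0, 1.0, 2.0, 5.0):
        print(f"{x:4.1f} {tanh_grad(x):10.6f} {sigmoid_grad(x):10.6f} {relu_grad(x):10.1f}")
    # Toy chain rule: ten stacked units, each with pre-activation 2.0, weights ignored.
    depth = 10
    print("tanh chain:", tanh_grad(2.0) ** depth)
    print("ReLU chain:", relu_grad(2.0) ** depth)
```

Near zero, tanh passes gradients through with a factor close to 1, four times the sigmoid's peak; but at $x = 2$ its derivative is already about 0.07, so the product over ten layers collapses, whereas the ReLU factor stays at 1 for positive inputs.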

Image Source: Junxi Feng


Tasks

| Task | Papers | Share of papers |
|---|---|---|
| Decoder | 24 | 3.39% |
| Language Modelling | 21 | 2.97% |
| Sentence | 20 | 2.82% |
| Time Series Forecasting | 19 | 2.68% |
| Management | 16 | 2.26% |
| Sentiment Analysis | 16 | 2.26% |
| Decision Making | 15 | 2.12% |
| Image Generation | 14 | 1.98% |
| Classification | 13 | 1.84% |

