Adaptive Primal-Dual Hybrid Gradient Methods for Saddle-Point Problems

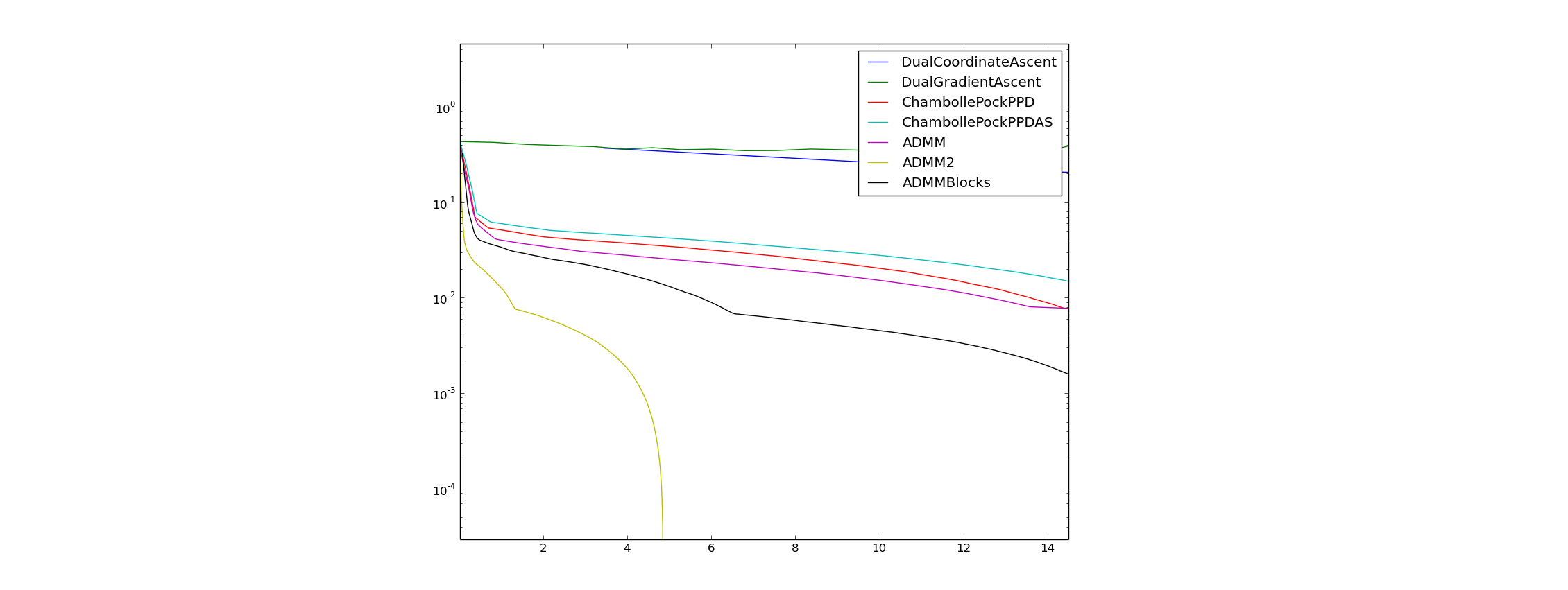

The primal-dual hybrid gradient (PDHG) method is a powerful optimization scheme that breaks complex problems into simple sub-steps. Unfortunately, PDHG methods require the user to choose stepsize parameters, and the speed of convergence is highly sensitive to this choice. We introduce new adaptive PDHG schemes that automatically tune the stepsize parameters for fast convergence without user input. We prove rigorous convergence results for our methods and identify the conditions required for convergence. We also develop practical implementations of adaptive schemes that formally satisfy the convergence requirements. Numerical experiments show that adaptive PDHG methods have advantages over non-adaptive implementations in both efficiency and ease of use.
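To make the role of the stepsize parameters concrete, the following is a minimal sketch of a standard, non-adaptive PDHG iteration with fixed stepsizes. It is not the adaptive scheme proposed here. The test problem (an l1-regularized least-squares problem written in saddle-point form), the function names, and the stepsizes `tau` and `sigma` are assumptions chosen for illustration. For fixed stepsizes, the classical sufficient condition for convergence is `tau * sigma * ||A||^2 < 1`.

```python
# Illustrative sketch only: fixed-stepsize PDHG, not the adaptive method.
# Problem (an assumption for this example):
#   min_x  lam * ||x||_1 + 0.5 * ||A x - b||^2
# written as the saddle-point problem
#   min_x max_y  lam * ||x||_1 + <A x, y> - (0.5 * ||y||^2 + <b, y>).

def soft_threshold(v, t):
    """Proximal operator of t * ||.||_1, applied elementwise."""
    return [max(abs(vi) - t, 0.0) * (1.0 if vi > 0 else -1.0) for vi in v]

def matvec(A, x):
    """Compute A x for a matrix stored as a list of rows."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def rmatvec(A, y):
    """Compute A^T y for a matrix stored as a list of rows."""
    return [sum(A[i][j] * y[i] for i in range(len(A)))
            for j in range(len(A[0]))]

def pdhg_l1_least_squares(A, b, lam, tau, sigma, iters=2000):
    """Run PDHG with user-chosen primal (tau) and dual (sigma) stepsizes."""
    x = [0.0] * len(A[0])
    y = [0.0] * len(A)
    for _ in range(iters):
        # Primal sub-step: gradient step on the coupling term, then prox.
        grad = rmatvec(A, y)
        x_new = soft_threshold(
            [xi - tau * gi for xi, gi in zip(x, grad)], tau * lam)
        # Extrapolated primal point used in the dual sub-step.
        x_bar = [2.0 * xn - xo for xn, xo in zip(x_new, x)]
        # Dual sub-step: closed-form prox of 0.5 * ||y||^2 + <b, y>.
        Ax = matvec(A, x_bar)
        y = [(yi + sigma * (ai - bi)) / (1.0 + sigma)
             for yi, ai, bi in zip(y, Ax, b)]
        x = x_new
    return x

if __name__ == "__main__":
    A = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    b = [3.0, -0.5, 1.5]
    # With A = I, ||A|| = 1, so tau * sigma = 0.81 < 1.
    print(pdhg_l1_least_squares(A, b, lam=1.0, tau=0.9, sigma=0.9))
```

Both sub-steps are cheap, but `tau` and `sigma` must be supplied by the user, and a poor choice slows convergence. This is the manual tuning that an adaptive scheme is meant to remove.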
